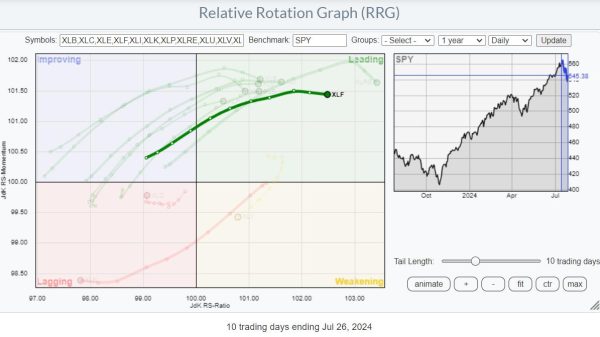

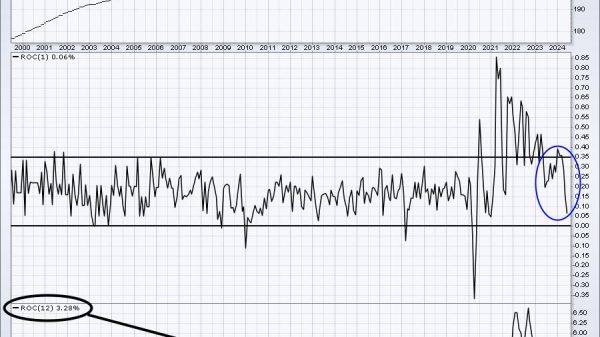

Microsoft announced a new version of its small language model, Phi-3, which can look at images and tell you what’s in them.

Phi-3-vision is a multimodal model, meaning it can read both text and images, and is best suited to mobile devices. Microsoft says Phi-3-vision, now available in preview, is a 4.2 billion parameter model (parameters are the values a model learns during training, and their count is a rough measure of its size and complexity) that can handle general visual reasoning tasks, such as answering questions about charts or images.

But Phi-3-vision is far smaller than other image-focused AI models like OpenAI’s DALL-E or Stability AI’s Stable Diffusion. Unlike those models, Phi-3-vision doesn’t generate images, but it can understand what’s in an image and analyze it for a…
